WavLM-Base

Microsoft’s WavLM

The base model was pretrained on speech audio sampled at 16 kHz. When using the model, make sure that your speech input is also sampled at 16 kHz.
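As a rough illustration of the 16 kHz requirement, the sketch below checks a waveform's sampling rate and resamples it if needed. The helper name `to_16khz` is hypothetical and not part of any WavLM tooling. It uses plain linear interpolation from NumPy as a stand-in for a proper resampler, so it does no anti-aliasing and is probably not suitable for production audio.

```python
import numpy as np

TARGET_SR = 16_000  # sampling rate the model card says the model expects


def to_16khz(waveform, orig_sr, target_sr=TARGET_SR):
    """Return a mono float32 waveform at target_sr (hypothetical helper).

    Linear interpolation only: a simple stand-in for a real resampler.
    """
    waveform = np.asarray(waveform, dtype=np.float32)
    if waveform.ndim != 1:
        raise ValueError("expected a mono waveform of shape (num_samples,)")
    if orig_sr == target_sr:
        return waveform  # already at the expected rate
    # Keep the duration fixed and compute the new number of samples.
    num_out = int(round(waveform.shape[0] / orig_sr * target_sr))
    old_times = np.arange(waveform.shape[0]) / orig_sr
    new_times = np.arange(num_out) / target_sr
    return np.interp(new_times, old_times, waveform).astype(np.float32)


# One second of a 440 Hz tone recorded at 8 kHz, converted to 16 kHz.
tone_8k = np.sin(2 * np.pi * 440 * np.arange(8_000) / 8_000)
tone_16k = to_16khz(tone_8k, orig_sr=8_000)
print(tone_16k.shape)
```

In practice a dedicated audio library with a band-limited resampler would likely be a better choice. The point here is only that the rate is checked before the audio reaches the model.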

Note: This model does not have a tokenizer as it was pretrained on audio alone. In order to use this model for speech recognition, a tokenizer should be created and the model should be fine-tuned on labeled text data. Check out this blog for a more detailed explanation of how to fine-tune the model.
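Creating a tokenizer for speech recognition usually starts with a vocabulary built from the training transcripts. The sketch below shows one way this could look, assuming a character-level vocabulary for CTC fine-tuning. The function name `build_ctc_vocab`, the example transcripts, and the special tokens `|`, `[UNK]` and `[PAD]` are illustrative choices, not something this model card prescribes.

```python
import json


def build_ctc_vocab(transcripts):
    """Map every character in the transcripts to an integer id (hypothetical helper)."""
    chars = sorted(set("".join(transcripts)))
    vocab = {ch: idx for idx, ch in enumerate(chars)}
    # Replace the raw space with a visible word delimiter (assumed convention).
    if " " in vocab:
        vocab["|"] = vocab.pop(" ")
    # Extra entries for unknown characters and for padding / the CTC blank.
    vocab["[UNK]"] = len(vocab)
    vocab["[PAD]"] = len(vocab)
    return vocab


# Made-up transcripts standing in for a labeled dataset.
transcripts = ["hello world", "speech model"]
vocab = build_ctc_vocab(transcripts)
vocab_json = json.dumps(vocab, ensure_ascii=False)
print(vocab_json)
```

Saved to a file, a mapping like this is the kind of vocabulary that `Wav2Vec2CTCTokenizer` in the transformers library accepts through its `vocab_file` argument, along with the matching `unk_token`, `pad_token` and `word_delimiter_token` settings.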

The model was pre-trained on 960h of Librispeech.

Paper: WavLM: Large-Scale Self-Supervised Pre-Training for Full Stack Speech Processing

Authors: Sanyuan Chen, Chengyi Wang, Zhengyang Chen, Yu Wu, Shujie Liu, Zhuo Chen, Jinyu Li, Naoyuki Kanda, Takuya Yoshioka, Xiong Xiao, Jian Wu, Long Zhou, Shuo Ren, Yanmin Qian, Yao Qian, Jian Wu, Michael Zeng, Furu Wei

Abstract

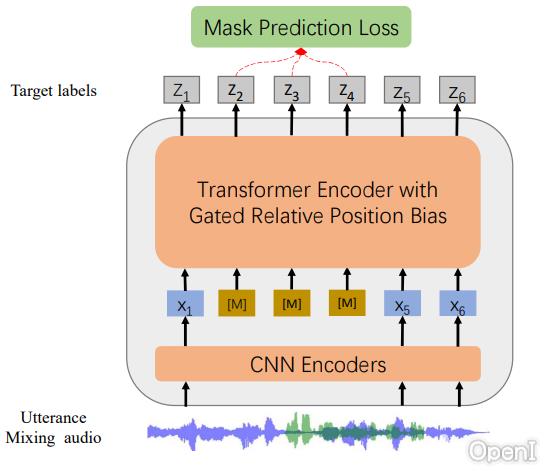

Self-supervised learning (SSL) achieves great success in speech recognition, while limited exploration has been attempted for other speech processing tasks. As speech signal contains multi-faceted information including speaker identity, paralinguistics, spoken content, etc., learning universal representations for all speech tasks is challenging. In this paper, we propose a new pre-trained model, WavLM, to solve full-stack downstream speech tasks. WavLM is built based on the HuBERT framework, with an emphasis on both spoken content modeling and speaker identity preservation. We first equip the Transformer structure with gated relative position bias to improve its capability on recognition tasks. For better speaker discrimination, we propose an utterance mixing training strategy, where additional overlapped utterances are created unsupervisely and incorporated during model training. Lastly, we scale up the training dataset from 60k hours to 94k hours. WavLM Large achieves state-of-the-art performance on the SUPERB benchmark, and brings significant improvements for various speech processing tasks on their representative benchmarks.
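The utterance mixing idea from the abstract can be sketched roughly as follows: a chunk of a second utterance is scaled and added onto part of the primary utterance to create an overlapped input. This is a minimal illustration, not the authors' implementation. The function name `mix_utterances`, the 50% overlap cap, and the energy-ratio range of -5 to 5 dB are assumptions made for the example.

```python
import numpy as np


def mix_utterances(primary, secondary, rng, max_overlap=0.5,
                   ratio_db_range=(-5.0, 5.0)):
    """Overlay a random chunk of `secondary` onto `primary` (illustrative sketch).

    max_overlap and ratio_db_range are assumed values, not taken from the abstract.
    """
    primary = np.asarray(primary, dtype=np.float32)
    secondary = np.asarray(secondary, dtype=np.float32)

    # The overlapped region covers at most max_overlap of the primary utterance.
    max_len = min(int(len(primary) * max_overlap), len(secondary))
    if max_len < 1:
        return primary.copy()
    mix_len = int(rng.integers(1, max_len + 1))
    src_start = int(rng.integers(0, len(secondary) - mix_len + 1))
    dst_start = int(rng.integers(0, len(primary) - mix_len + 1))
    chunk = secondary[src_start:src_start + mix_len]

    # Scale the chunk so the primary-to-chunk energy ratio equals a random dB value.
    ratio_db = float(rng.uniform(*ratio_db_range))
    primary_energy = float(np.mean(primary ** 2))
    chunk_energy = float(np.mean(chunk ** 2))
    if chunk_energy > 0.0:
        scale = float(np.sqrt(primary_energy / (chunk_energy * 10 ** (ratio_db / 10))))
    else:
        scale = 0.0  # a silent chunk contributes nothing

    mixed = primary.copy()
    mixed[dst_start:dst_start + mix_len] += scale * chunk
    return mixed


rng = np.random.default_rng(0)
primary = rng.standard_normal(16_000)    # stand-in for 1 s of speech at 16 kHz
secondary = rng.standard_normal(12_000)  # stand-in for another utterance
mixed = mix_utterances(primary, secondary, rng)
print(mixed.shape)
```

Because the mixed-in chunk never covers more than half of the primary utterance in this sketch, the primary speaker stays dominant in the resulting signal.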

The original model can be found under https://github.com/microsoft/unilm/tree/master/wavlm.

Usage

This is an English pre-trained speech model that has to be fine-tuned on a downstream task like speech recognition or audio classification before it can be used for inference. The model was pre-trained on English and should therefore perform well only in English. The model has been shown to work well on the SUPERB benchmark.

Note: The model was pre-trained on phonemes rather than characters. This means that one should make sure that the input text is converted to a sequence of phonemes before fine-tuning.

Speech Recognition

To fine-tune the model for speech recognition, see the official speech recognition example.

Speech Classification

To fine-tune the model for speech classification, see the official audio classification example.

Speaker Verification

TODO

Speaker Diarization

TODO

Contribution

The model was contributed by cywang and patrickvonplaten.

License

The official license can be found here
